Table of Contents

Monte Carlo Tree Search

This class continues topic introduced in the previous lab. This time we will focus on the famous Monte Carlo Tree Search (MCTS). While MiniMax with alpha/beta pruning uses branch and bound approach to search the game tree exhaustively, some game trees are just too big to be explored, e.g. Go game).

MCTS on the other hand is a probabilistic algorithm, only sampling the game tree in the indeterministic manner.

Related info:

Monte Carlo

The Monte Carlo algorithms are named after the famous casions, where you can play the 'one-armed bandit' slot machines. The idea is that one has many different slot machines to choose from in the casino and every machine has different unknown winning chance. Problem to solve: having a fixed amount of game tokens, how to find a machine that maximizes profits?

The Monte Carlo algorithms are named after the famous casions, where you can play the 'one-armed bandit' slot machines. The idea is that one has many different slot machines to choose from in the casino and every machine has different unknown winning chance. Problem to solve: having a fixed amount of game tokens, how to find a machine that maximizes profits?

In games a “slot-machine” is represented by a possible move with unknown impact on the game, and we have to find the best move, having limited amount of computational resources (tokens). The simplest approach would be to choose moves at random and simulate the game (such simulation is called playout) until the end. We do it as long as we can, then we check which move had the best wins to playouts ratio.

as long as we can: move = choose_random_move() score = payout(move) save_score(move) calculate_average_win_ratio() choose_move_with_the_best_ratio()

Assignments

- check the basic Monte Carlo implementation

SampleMonteCarloGamer.java - run several matches Alpha-Beta vs Monte Carlo:

- at some simple game, e.g. tic-tac-toe,

- at something bigger, e.g. checkers

- make sure that neither of them times out without making a move

Monte Carlo Tree Search

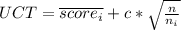

Monte Carlo wasn't the smartest approach to the problem - we can be more intelligent than some random sampling. The idea behind a more advanced Monte Carlo Tree Search is to choose moves, according to a simple scoring formula:

where:

the average score of the

the average score of the  -th move

-th move is total number of playouts

is total number of playouts is number of playouts after choosing the

is number of playouts after choosing the  -th move

-th move is a constant parameter, from theoretical reasons set traditionally to

is a constant parameter, from theoretical reasons set traditionally to

As you see, the UCT assigns high scores to machines that:

- have high average score (

is high)

is high) - haven't been tried too many times (low

).

).

This way we balance both exploration and exploitation of the search space. The algorithm is called Tree Search because in essence it explores tree in a intelligent manner — you can say that it approximates MiniMax by randomized exploration.

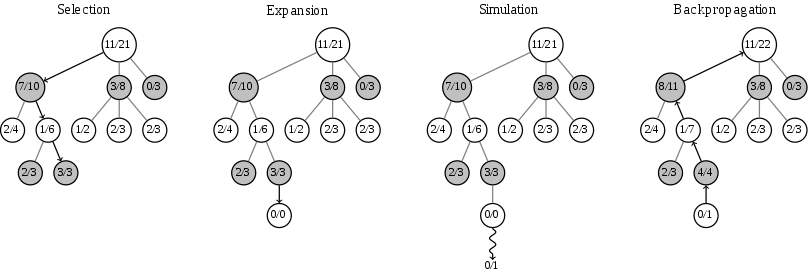

The algorithm always starts in the current game state (root of the tree) and consists in four phases:

- selection:

- if the node has been explored (all possible moves were tried at least once) we use the UCT formula to choose one of them to get another node. We repeat this process until we get a node, that has at least one previously unplayed move.

ATTENTION/ACHTUNG/UWAGA

ATTENTION/ACHTUNG/UWAGA  like in the MiniMax algorithm, we assume that we play zero-sum game and our opponent would like to minimize our score. So when selecting his moves we have to be smart enough to either minimize the UCT score, or store the scores in an intelligent manner.

like in the MiniMax algorithm, we assume that we play zero-sum game and our opponent would like to minimize our score. So when selecting his moves we have to be smart enough to either minimize the UCT score, or store the scores in an intelligent manner.

- growth: we choose one of the unplayed moves (some implementation can choose more, e.g. all unplayed moves from the current node)

- simulation: we make a playout starting with the chosen move. Most basic way to implement playout is to do totally random game simulation.

- backprogation: we store results of the playout in the nodes belonging to the tree branch we have explored.

We repeat those phases as long as we can - finally we select a move that had the highest number of playouts - we know it's the best because algorithm favors the most promising moves when sampling.

Below you can see illustration of one MCTS cycle. There are two things worth of noting:

- this is not first cycle of the algorithm, the tree is already expanded;

- gray moves belong to our opponent, so during backpropagation we store our loss as his win.

Assignments

- Based on the

SampleMonteCarloGamer.java, implement theSampleMCTSGamer.javaplayer- MCTS assumes that won games has score 1, not 100 as in the ggp framework. Tie also should be equal 0,5.

- Run several matches

SampleMCTSGamer.javavsSampleMonteCarloGamer.javaat some big game (chess, checkers?). Does any of them have an upper hand? - Repeat experiment with diffrent

values: 0, 1, 2, 5, 42. Which is the most effective?

values: 0, 1, 2, 5, 42. Which is the most effective? - Modify MCTS by mixing it with the plain Monte Carlo. First run some random playouts (Monte Carlo) for a limited amount of time (e.g. 20% of the total time given for the deliberation). Does it improve results?