This is an old revision of the document!

Classical Game Theory Algorithms

This class introduces classical game theory algorithms for zero sum games with alternate moves. “Zero sum” means that one player's victory is at the same time a defeat for the other player (sum of the rewards/penalties is equal zero). All presented algorithms will based on so called "game tree" representation.

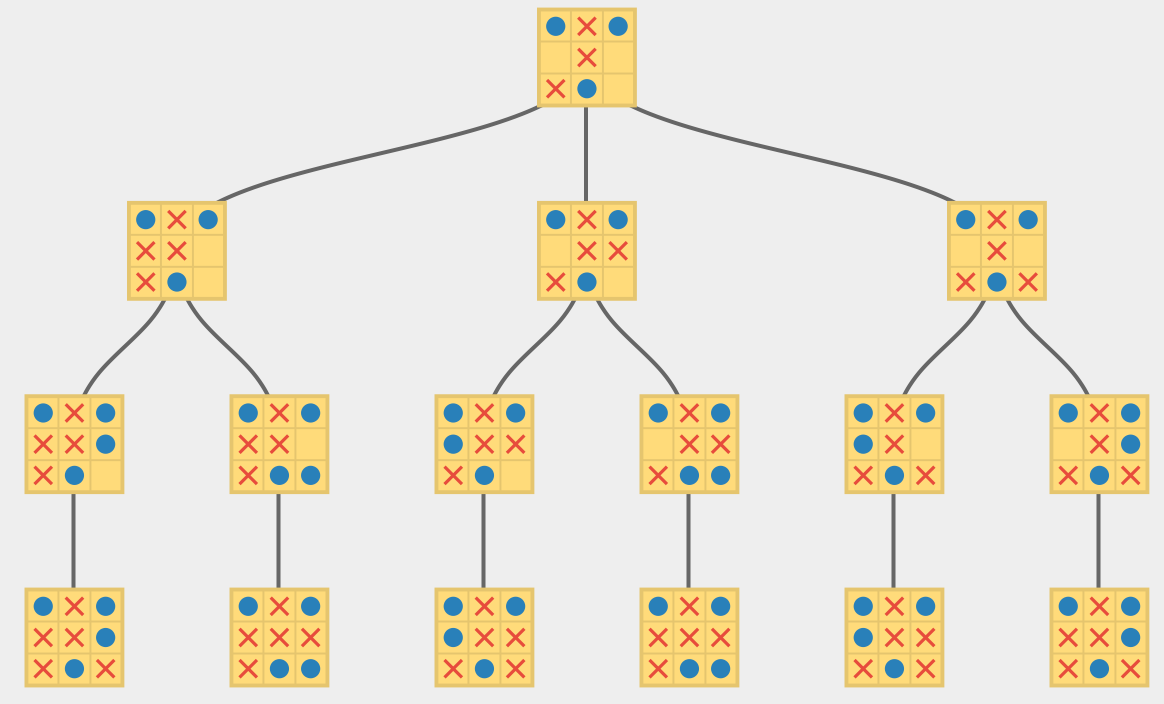

Game tree, is (as name suggests) a directed acyclic graph where nodes represent possible game states, root is the initial state and edges are possible players' move changing the game state to another.

Below you can see a game tree of the 'tic-tac-toe' game, starting three moves before the ending.

We will use the 'ggp-base' framework, introduced together with the gdl language.

First Legal Move

The simplest strategy is to perform the first legal move:

move = first_legal_move()

perform(move)

This complicated algorithm is implementd in the ggp-base, in org.ggp.base.player.gamer.statemachine.sample.SampleAlphabetGamer.java class. The bot sorts the legal moves lexically, then performs the first one.

The few things should catch our attention:

Testing

Testing of the algorithm can be done in two ways:

via the Kiosk app (org.ggp.base.apps.kiosk), introduced on the previous class. We can select the bot there and play with it a sparing.

via the GameServer (org.ggp.base.app.server) and Player (org.ggp.base.app.player) apps:

run the GameServer and select a game

run the Player app and create two players (Create button, you can also select player's type)

start new match in the GameServer app

Assignments

based on the SampleAlphabetGamer.java create a SampleRandomGamer.java bot, that performs random action.

run tic-tac-toe matchSampleAlphabetGamer.java vs SampleRandomGamer.java

Two-moves Lookahead

The more complicated algorithms search the game tree to find a better move. The strategy looking two moves ahead, could be:

Or:

current_move_candidate = null

for every possible move:

if is a winning move:

choose the move

if is a losing move:

forget about it

if is worse than the current_move_candidate

forget about it

simulate performing the move and for every opponent's move after that:

if the move is a losing move (for you):

forget about it

current_move_candidate = current_move

perform(current_move_candidate)

This strategy is implemented in the SampleSearchLightGamer.java class.

It's worth to notice few things:

algorithms stops work after the timeou

you can simulate the move via: getNextState which takes as arguments state and all the players' moves. Thanks to the empty (noop) move, we can get the move via the getRandomJointMove method.

isTerminal(state);

checks if the state is teminal

getGoal(state, role);

returns the result of the game for the player. In the terminal states 100 means victory; 0, defeat. The intermediate values (e.g. 50) represent ties. In some games we can check this value to check which player is in a better situation.

Assignments

MiniMax

The MiniMax algorithm is a classical game-theory algorithms which results in an optimal move (as long as we have enough time to run it to the end).

It assumes, that our opponent will always perform the best move (for him). The MiniMax player always chooses a move that minimizes our loss.

To learn more check the links below:

Please make sure that you understant the algorithm.

Ćwiczenia

Create a MiniMax player based on the code below:

public class SampleMiniMaxGamer extends SampleGamer {

// our role's index

Integer roleIndex = 0;

@Override

public Move stateMachineSelectMove(long timeout)

throws TransitionDefinitionException, MoveDefinitionException,

GoalDefinitionException {

long start = System.currentTimeMillis();

// find our player's index

roleIndex = getStateMachine().getRoleIndices().get(getRole());

// find the best move

Move selection = getBestMove(getCurrentState());

// just to satisfy the ggp interface, not important

long stop = System.currentTimeMillis();

List<Move> moves = getStateMachine().getLegalMoves(getCurrentState(), getRole());

notifyObservers(new GamerSelectedMoveEvent(moves, selection, stop - start));

return selection;

}

// finds the best move using the MiniMax algorithm

private Move getBestMove(MachineState state) throws MoveDefinitionException, TransitionDefinitionException, GoalDefinitionException{

List<List<Move>> moves = getStateMachine().getLegalJointMoves(state);

Integer score = 0;

Move bestMove = moves.get(0).get(roleIndex);

for(List<Move> move : moves){

Integer result = getMinScore(move, getCurrentState());

if (result > score){

score = result;

bestMove = move.get(roleIndex);

}

}

return bestMove;

}

private Integer getMinScore(List<Move> jointMove, MachineState state) throws MoveDefinitionException, TransitionDefinitionException, GoalDefinitionException {

// TODO:

// 1) find a state after the move

// 2) if the state is terminal, return result

// 3) check all the possible moves

// 4) find a move that gives as the minimum reward in the end

// 5) return the best reward we can achieve from the resulting state

return 0;

}

private Integer getMaxScore(List<Move> jointMove, MachineState state) throws MoveDefinitionException, TransitionDefinitionException, GoalDefinitionException {

// TODO:

// 1) find a state after the move

// 2) if the state is terminal, return result

// 3) check all the possible moves

// 4) find a move that gives as the highest reward in the end

// 5) return the best reward we can achieve from the resulting state

return 100;

}

}

test if the MiniMax wins with other bots in 'tic-tac-toe'

the same with 'checkers'

implement timeout handling

test the 'checkers' again

bonus assignments

add possibility to cinstraint the search depth (several moves look-ahead) — it's a classical method to scale the AI power. Why this method may be better than the timeout?

-

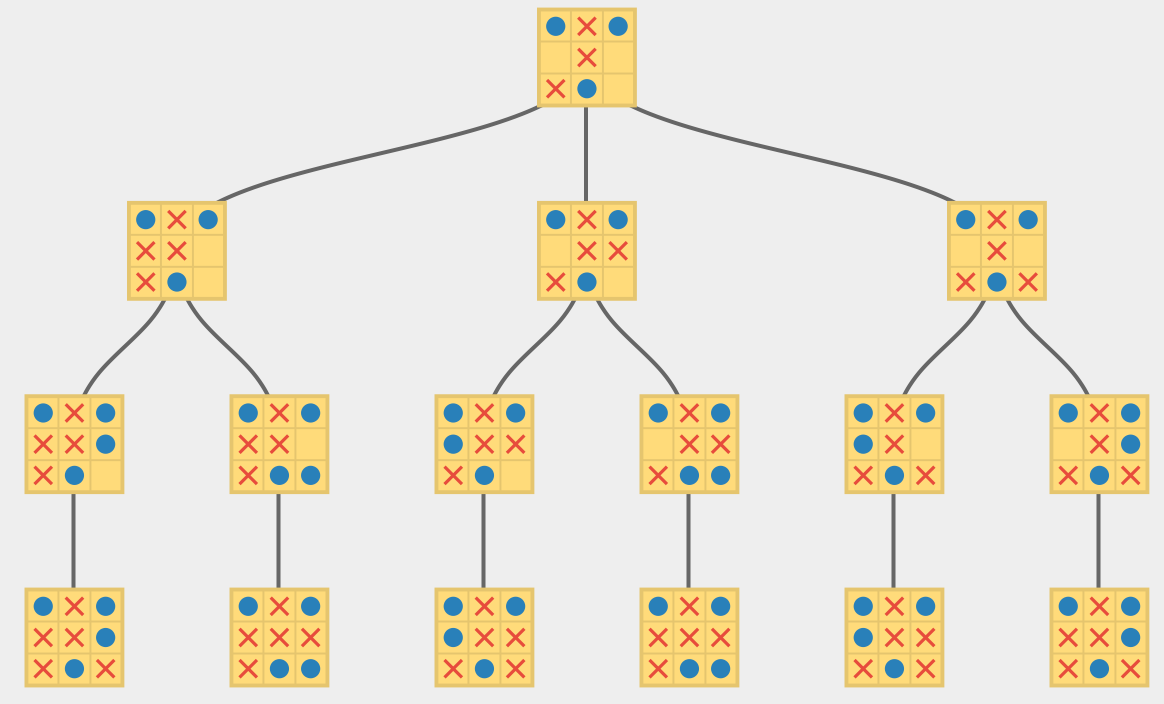

Alpha-Beta Pruning

The MiniMax algorithm search all the tree's nodes which is unnecessary. To avoid it we can use so called Alpha-Beta cuts. The idea is that MiniMax doesn't have to check nodes, which are known to not have the better result than one we already have.

The MiniMax algorithm search all the tree's nodes which is unnecessary. To avoid it we can use so called Alpha-Beta cuts. The idea is that MiniMax doesn't have to check nodes, which are known to not have the better result than one we already have.

Ending of the vidoes shows an example of Alpha-Beta pruning.

Proszę się upewnić, że rozumieją Państwo działanie cięć Alpha-Beta. Należą one do szerokiej rodziny algorytmów branch and bound, używanych w szczególności w optymalizacji.

Ćwiczenia

Proszę zaimplementować gracza SampleAlphaBetaGamer.java bazującego na graczu MiniMax z ograniczeniem czasowym.

Proszę przeprowadzić serię pojedynków SampleAlphaBetaGamer.java vs SampleMiniMaxGamer.java w warcaby lub podobną grę. Czy po kilku rozgrywkach widać jakąś przewagę nowego bota?